AI adoption is an organisational transformation, not a technology project.

Most organisations are experimenting with AI. Far fewer are redesigning how work happens. Human–AI Systems brings a structured methodology that connects strategy to operational change — identifying where AI creates value, testing it in real workflows, and scaling what works.

Why most AI programmes stall

Organisations invest in pilots, run experiments and build proof-of-concept prototypes. Many of these work well technically. But they rarely make the transition into how the organisation actually operates.

The problem is not the technology. It is the gap between a successful experiment and a changed operating model. Workflows remain unchanged. Governance is unclear. Managers are not prepared to lead teams where humans and AI work alongside each other.

The result is an ever-growing collection of pilots that never scale — and an organisation that feels busy with AI without seeing material impact.

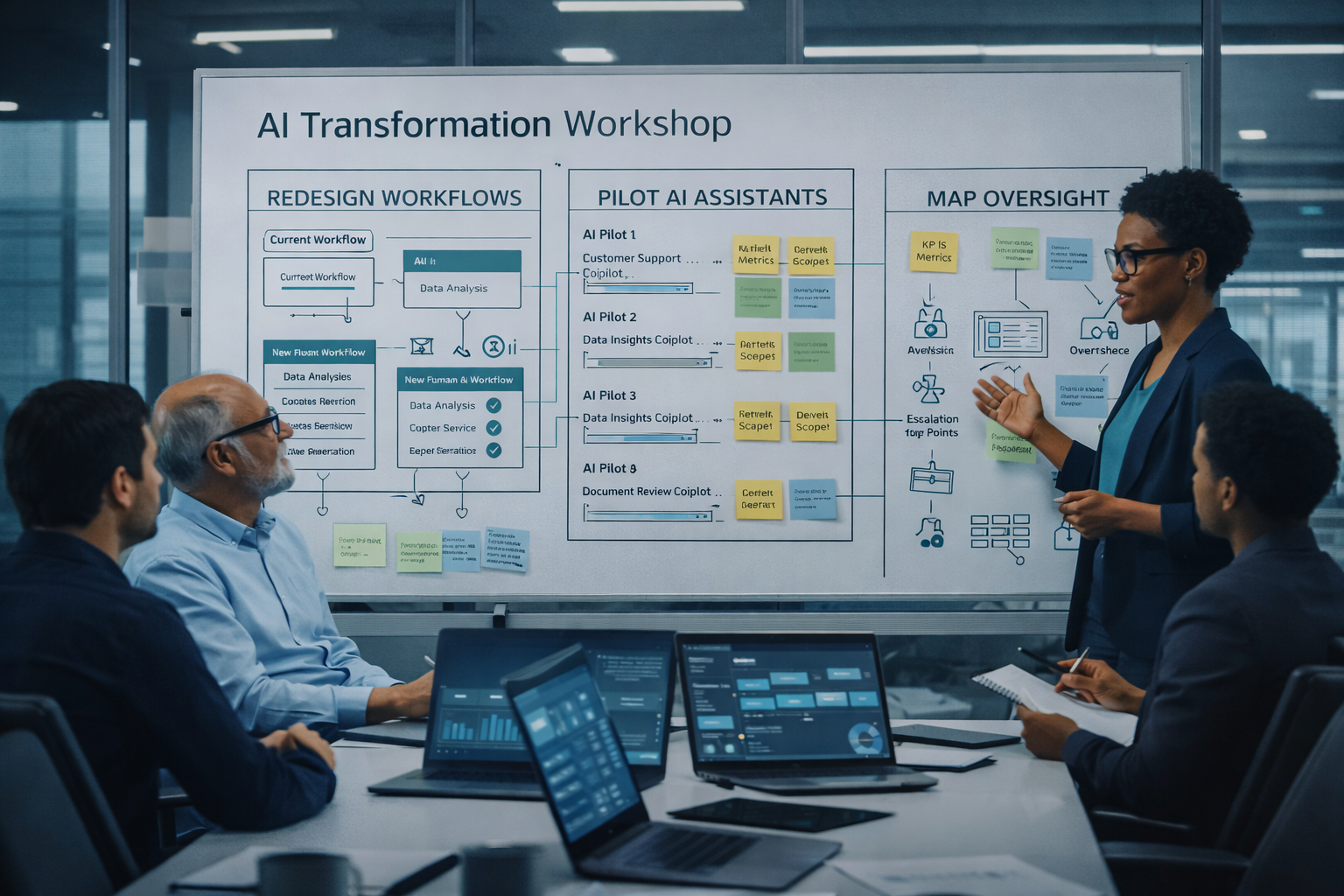

Radar → Pilot → Scale

A structured pathway that takes organisations from opportunity identification through validated testing to operational deployment. Each stage builds the evidence and confidence needed for the next.

Radar

Review workflows, surface opportunities, assess risks and prioritise realistic pilot candidates. The goal is a small number of high-impact opportunities rather than a long list of speculative ideas.

Pilot

Introduce AI into real processes with defined success measures, governance controls and end-user involvement. The aim is not just technical validation but an understanding of how the workflow needs to change.

Scale

Embed successful patterns into the operating model. Define ownership, governance, performance monitoring and the management practices needed to sustain AI-enabled workflows.

See where AI creates real value

Radar is a diagnostic engagement. It examines operational workflows, customer and citizen interactions, knowledge-intensive processes and decision points — looking for where AI can deliver measurable improvement rather than marginal convenience.

The output is a prioritised opportunity portfolio with value and feasibility assessments, governance considerations and recommended pilot candidates. It gives leadership a clear, evidence-based view of where to focus.

- Workflow and process review across departments

- Opportunity identification and value assessment

- Risk, governance and compliance analysis

- Prioritised pilot candidates with business case

Learn what changes when AI enters a workflow

A structured pilot is not just a technology test. It defines success metrics, builds governance controls, involves end users and examines how the workflow itself needs to adapt.

Pilots produce evidence — not just about whether the AI works, but about how roles, decisions and oversight need to change for the organisation to operate differently.

- Clearly scoped pilot with success metrics

- Governance and compliance controls from day one

- End-user involvement and feedback loops

- Workflow redesign insights and scaling readiness assessment

Move from experiment to operating model

Scaling is where most organisations falter. Successful pilots remain isolated experiments because the organisation has not prepared for the structural changes needed to embed AI into everyday operations.

Scale involves defining operational ownership, embedding AI within workflows, establishing governance oversight, implementing performance monitoring and building the management capability to lead hybrid teams. This is where AI becomes part of how the organisation works — not something it experiments with.

- Operational ownership and accountability structures

- Workflow integration and process redesign

- Governance framework and compliance monitoring

- Performance metrics and continuous improvement

- Capability development for hybrid team leadership

Tool → Assistant → Worker

AI capability inside organisations follows a natural progression. Understanding where you are on this path shapes how you plan adoption, manage risk and prepare your people.

Tool

AI enhances existing software — writing assistance, summarisation, predictive suggestions. Productivity improves, but organisational structure does not significantly change. This is where most organisations are today.

Assistant

AI performs discrete tasks under human direction — drafting reports, analysing datasets, assisting customer support. Humans remain responsible for directing and reviewing work. This is the current focus of most enterprise adoption.

Worker

AI operates inside defined workflows with human oversight rather than constant direction. Examples include automated case triage, document analysis pipelines and operational decision support. This introduces new challenges in supervision, accountability and governance.

The greatest organisational impact occurs at the Worker stage. Managers will increasingly lead hybrid teams, which requires new practices for workflow design, quality oversight and accountability for AI-supported decisions.

Why this matters for your organisation

Each stage of AI capability brings different implications for workflow design, governance and management practice. An organisation deploying AI tools needs different structures from one embedding AI workers into operational processes.

Understanding this progression helps leaders plan adoption realistically — investing in the right governance, capability and change management at each stage rather than trying to leap from experimentation to full automation.

Leading teams where humans and AI work together

As AI moves from tool to worker, organisations need new management practices. Hybrid Workforce Leadership addresses the practical reality of humans and AI operating together inside the same workflows.

Workflow design

Defining which tasks AI performs, where human oversight is required and how work flows between human and AI contributors. Clear workflow design ensures AI augments operations rather than creating confusion.

Governance and accountability

This covers transparency, data protection, regulatory compliance and auditability of decisions. AI systems must operate within organisational and regulatory boundaries, particularly in regulated sectors.

Capability development

Employees need new skills: supervising AI outputs, understanding AI limitations and managing AI-supported workflows. The shift is from using tools to managing AI-enabled processes.

Continuous adaptation

AI evolves rapidly. Organisations need a continuous learning model that allows workflows, governance and training to evolve alongside technology — not a one-off transformation programme.

Organisational transformation, not technology consulting

Strategy consultancies plan. Technical agencies build. Human–AI Systems bridges the gap — helping organisations translate AI strategy into operational adoption.

The focus is on the organisational dimension: how AI becomes part of the workforce, how it is governed responsibly and how it delivers measurable operational value. This is not about selecting vendors or writing code. It is about redesigning how work happens.

Every engagement is grounded in practical experience of leading AI transformation inside complex organisations — not theoretical frameworks applied from the outside.

Ready to move beyond experimentation?

Most engagements begin with a 30-minute conversation. There is no agenda beyond understanding your situation and whether there is a genuine fit.